Hi there!

Welcome to the 7th edition of Work in Beta.

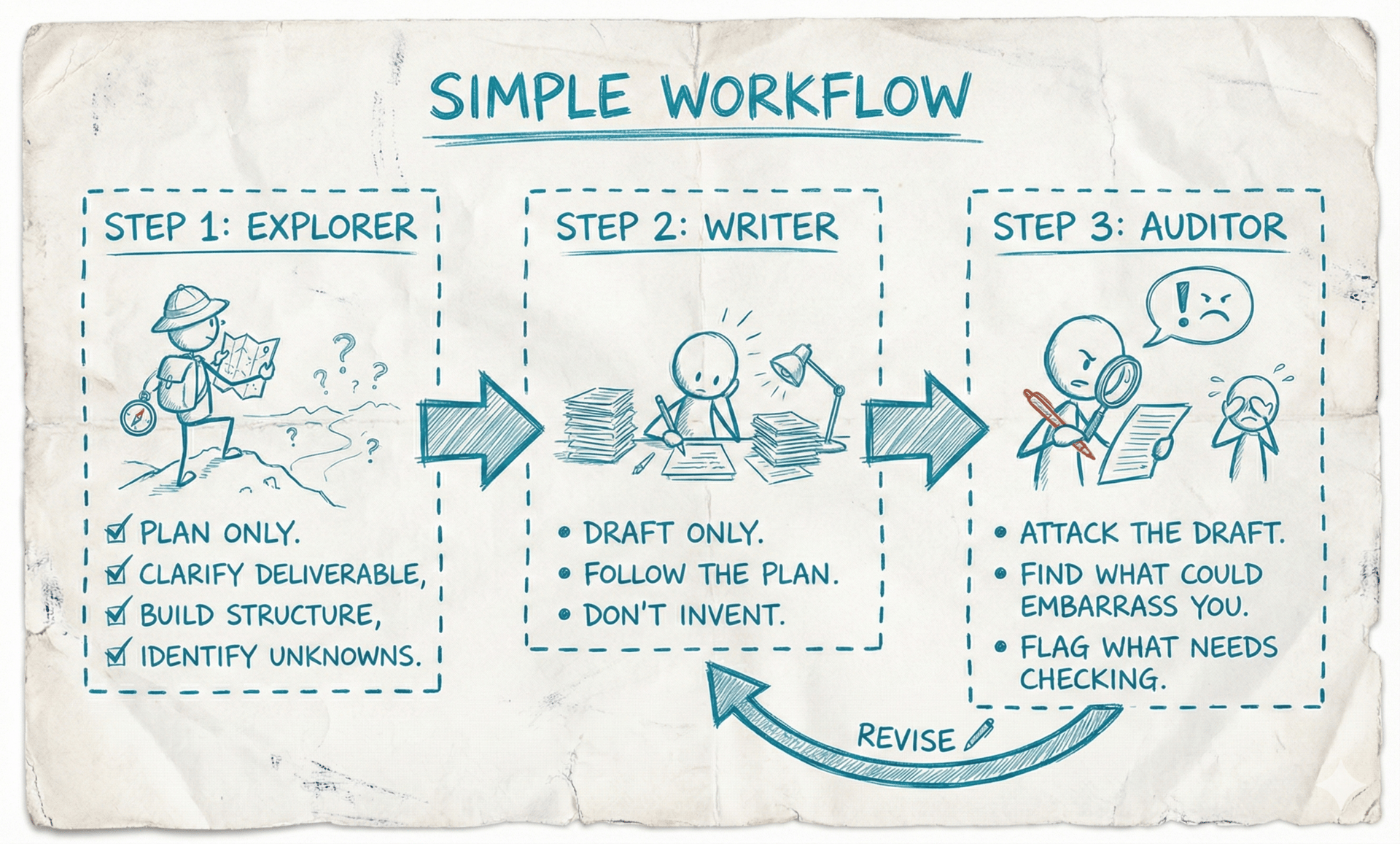

In this edition, we tell you how to ensure your AI-generated work is credible using a step-by-step workflow called the Three-Step Loop: ‘Explorer → Writer → Auditor’.

We are also hosting our second AI WORKSHOP for non-technical professionals later this month on 21st February and would love for you to join. More details towards the end.

Let’s dive in!

THE ‘HOW TO’ PLAYBOOK

How to Get AI Work Output That Survives Scrutiny

Image Credits: Work in Beta / Nano Banana Pro

We use AI for everything now. Client proposals. Updates. Analyses. This newsletter.

For months, our workflow was: one prompt, one draft, clean it up, send.

The output always looked professional. But it kept missing the mark - wrong angle, generic insights, claims we couldn't back up.

We were skipping two things. The pre-thinking - do we have clarity on what exactly this needs to be? And the post-check - can we actually defend what's in here?

One prompt skips both. AI jumps straight to drafting. It doesn't pause to validate direction. It doesn't circle back to verify claims. You get something polished and hollow.

The fix wasn't better prompts. It was breaking the work into three focused steps.

The Core Insight: You're Mixing 3 Jobs Into 1 Prompt

Every credible piece of work - a brief, an update, a proposal - typically requires three separate jobs:

Thinking/Structuring: What exactly is needed? What angle fits this audience? What's the right structure for this decision?

Drafting: Turn the plan into clean writing. No new ideas. No invention.

Checking: Can we defend every claim? What's verified vs. assumed?

When you blend all three into one prompt, AI doesn't know which job to prioritize. So it guesses structure, invents facts, and skips verification - all at once. The result: confident blur.

The shift: stop prompting harder. Start separating roles.


The Method: Three-Step Loop

The rules are simple:

Step 1 - Explorer: Plan only. Clarify the deliverable, build the structure, identify unknowns.

Step 2 - Writer: Draft only. Follow the plan. Don't invent.

Step 3 - Auditor: Attack the draft. Find what could embarrass you. Flag what needs checking.

Three-Step Loop | Image Credits: Work in Beta / Nano Banana Pro

Each step has a single role. That's the entire system.

One more thing: run each step in its own chat. If your tool has Projects (like ChatGPT), keep all three chats in the same project, but open a new chat for each role. Don't run all three in one conversation - AI will blend the roles and you lose the discipline.

Between chats, you carry context forward using a Context Capsule - a single document that Explorer produces, containing everything the next chat needs. Copy it, paste it. That's the entire handoff.

The Walkthrough: A Self-Appraisal

Review season is around the corner, which means writing your self-appraisal. Here's how we ran it.

Step 1: Explorer

In a new chat, we wrote the following prompt:

You are EXPLORER. Your job is to clarify and plan, not draft.

I am creating:

DELIVERABLE: Self-appraisal for AY 2025 performance review

AUDIENCE: My manager (and HR will read it)

PURPOSE / DECISION: Demonstrate impact against goals, support case for strong rating

CONSTRAINTS: Company template has 4 sections - Key Achievements, Goals Progress, Development Areas, Next Year Goals. Honest but confident tone. Under 800 words.

INPUTS I HAVE: My 3 OKRs for H2, project list, one client testimonial, no hard metrics for two projects (attached)

Step 1.1: Ask up to 5 clarifying questions. If none needed, say "No questions".

Do not draft the final deliverable.

Output of Explorer Step 1.1 in Gemini | Image Credits: Work in Beta

Explorer asks things like: "Which OKR had the strongest measurable result?" and "For the two projects without hard metrics, do you have qualitative signals - feedback, adoption, repeat requests?"

We answered, went back and forth until we were aligned on what mattered, and then continued in the same chat thread with Step 1.2:

My answers to your clarifying questions are below. Evaluate them and run Step 1.2.

Clarifying question answers: [your answers to Step 1.1 here]

Step 1.2: Output a CONTEXT CAPSULE containing:

1. Deliverable

2. Audience

3. Purpose/Decision

4. Key points (max 5 bullets)

5. Outline (6-10 bullets, in the order it should appear)

6. Assumptions (max 5)

7. Open questions / [VERIFY] items

Output of Explorer Step 1.2 in Gemini | Image Credits: Work in Beta

This is the pre-thinking loop. Before a single word was drafted, we had clarity on which achievements to highlight, what evidence we had, and what gaps to flag honestly.
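For readers who keep their capsules in a doc or script rather than only in chat, here is a minimal Python sketch of the seven-part Context Capsule from Step 1.2. It is our illustration, not something Gemini or ChatGPT requires: the class `ContextCapsule`, its field names, and the `problems()` check are hypothetical, and the limits it enforces (max 5 key points, 6-10 outline bullets, max 5 assumptions) are simply the ones in the Step 1.2 prompt.

```python
from dataclasses import dataclass, field


@dataclass
class ContextCapsule:
    """Hypothetical container for the seven parts Explorer outputs in Step 1.2."""
    deliverable: str
    audience: str
    purpose: str
    key_points: list[str] = field(default_factory=list)      # max 5
    outline: list[str] = field(default_factory=list)         # 6-10, in order
    assumptions: list[str] = field(default_factory=list)     # max 5
    open_questions: list[str] = field(default_factory=list)  # [VERIFY] items

    def problems(self) -> list[str]:
        """Return the Step 1.2 limits this capsule breaks (empty list if none)."""
        issues = []
        if len(self.key_points) > 5:
            issues.append("Key points: more than 5 bullets")
        if not 6 <= len(self.outline) <= 10:
            issues.append("Outline: needs 6-10 bullets")
        if len(self.assumptions) > 5:
            issues.append("Assumptions: more than 5")
        return issues

    def to_text(self) -> str:
        """Render the capsule as plain text, ready to paste into the Writer chat."""
        def bullets(items: list[str]) -> str:
            # An empty list renders as "- (none)" so every heading stays visible.
            return "\n".join(f"- {item}" for item in items) or "- (none)"

        return "\n\n".join([
            f"1. Deliverable\n{self.deliverable}",
            f"2. Audience\n{self.audience}",
            f"3. Purpose/Decision\n{self.purpose}",
            f"4. Key points\n{bullets(self.key_points)}",
            f"5. Outline\n{bullets(self.outline)}",
            f"6. Assumptions\n{bullets(self.assumptions)}",
            f"7. Open questions / [VERIFY] items\n{bullets(self.open_questions)}",
        ])


capsule = ContextCapsule(
    deliverable="Self-appraisal for AY 2025 performance review",
    audience="My manager (and HR will read it)",
    purpose="Demonstrate impact against goals, support case for strong rating",
    outline=["Key Achievements", "Goals Progress", "Development Areas", "Next Year Goals"],
)
print(capsule.problems())  # ['Outline: needs 6-10 bullets']
print(capsule.to_text())
```

Running `problems()` before the handoff catches an over-stuffed or under-planned capsule; `to_text()` is the copy-paste block for the Writer chat.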

Step 2: Writer

In a new chat, we pasted the Context Capsule from Step 1 into the prompt below:

You are WRITER. Your job is to draft the deliverable using the plan.

Here is the Context Capsule from Explorer:

[paste capsule]

Rules:

1) Follow the outline order.

2) Treat assumptions as given.

3) If a fact is in Open questions / [VERIFY], mark it as [VERIFY] in the draft.

4) Do not introduce new facts beyond the capsule.

Deliverable requirements:

- Match the audience and tone.

- Keep within the constraints.

- Make it skimmable.

Now draft the deliverable.

Then list:

- All [VERIFY] items in the draft

- 2 optional improvements (clarity/structure)

Output of Writer in Gemini | Image Credits: Work in Beta

Writer produced a clean self-appraisal following the outline. Where it didn’t have confirmed information, it flagged [VERIFY] - like "[VERIFY: confirm 8-day onboarding time from project tracker]" instead of inventing a number.
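Writer is asked to list its own [VERIFY] items, but it is worth cross-checking that list before you move to Auditor. If your draft lives in a text file, a minimal Python sketch can pull out every marker. It assumes markers look like `[VERIFY]` or `[VERIFY: note]`, as in the example above; `find_verify_items` is a hypothetical helper and the sample draft is made up.

```python
import re

# Matches "[VERIFY]" or "[VERIFY: some note]"; the note (if any) is captured.
VERIFY_PATTERN = re.compile(r"\[VERIFY(?::\s*([^\]]*))?\]")


def find_verify_items(draft: str) -> list[str]:
    """Hypothetical helper: return every [VERIFY] note in order ("" for a bare [VERIFY])."""
    return [match.group(1) or "" for match in VERIFY_PATTERN.finditer(draft)]


# Made-up sample draft for illustration only.
draft = (
    "Cut onboarding time to 8 days "
    "[VERIFY: confirm 8-day onboarding time from project tracker]. "
    "SOPs were adopted by 3 teams [VERIFY]."
)
for note in find_verify_items(draft):
    print("-", note or "(no note)")
```

If this list and Writer's own list disagree, fix that before the draft goes to Auditor.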


Step 3: Auditor

In a new chat, we pasted the draft from Step 2 into the prompt below:

You are AUDITOR. Your job is to find what could embarrass me and fix it.

Here is the draft:

[paste draft]

Output:

1) Risky Claims Table: claim | why risky | risk (low/med/high) | what to verify with

2) Suggested Safer Rewrites: replace risky lines with defensible phrasing

3) Verification Checklist: the minimal set of checks I must do before submitting

4) Final "Ready to Submit?" verdict: yes/no + what blocks it

Output of Auditor in Gemini | Image Credits: Work in Beta

Auditor flagged things like: "You say SOPs were 'adopted by 3 teams' - do you have evidence of adoption or just that you shared them?" It caught the difference between what we did and what we could prove, and suggested safer phrasing for anything unverified.

This is the post-check loop. Before you submit, you know exactly what's defensible and what isn't.

The Refinement Loop: Writer ↔ Auditor

Here's where it gets powerful.

After Auditor gave us suggestions, we went back to the same Writer chat - all our previous context was still there. We didn't need to re-explain anything. We just said:

I ran the draft you wrote through an auditor. Here are the suggestions. Tell me what changes should be made, and ask any clarifying questions if needed.

[output of auditor]

Writer updated the draft based on Auditor's feedback. We then pasted the revised draft back into the same Auditor chat and asked it to review again. This time, it passed.

You can keep running this Writer ↔ Auditor loop until the output meets your standard. Each round gets tighter. The key: use the same chat threads for Writer and Auditor so context accumulates instead of resetting.

A note on the prompts above: You can repurpose the same prompts for client emails, project proposals, status reports, analyses. Change the DELIVERABLE, AUDIENCE, and PURPOSE in Step 1 and the rest follows.

Bonus: Polish your AI-written draft so it sounds like a real person wrote it, using our De-AI CustomGPT.

Mistakes We See Everyone Make

Mistake 1: Skipping Explorer

You go straight to "Write me a self-appraisal." AI guesses what achievements matter, guesses the angle, guesses the structure. You get a generic appraisal that sounds like it could belong to anyone.

Mistake 2: Letting Writer invent

Writer doesn't have a metric, so it fills the gap with something plausible. "Significantly improved onboarding efficiency" - sounds good, means nothing. If you can't put a number on it, mark it [VERIFY] and find the real data.

Mistake 3: Running all three jobs in one chat

Explorer starts drafting. Writer starts checking. Auditor adds new ideas. Everything blurs. That's the one-prompt problem all over again - just slower.

In Closing

The difference between AI work that survives scrutiny and AI work that doesn't isn't smarter prompts. It's separating the jobs.

Explorer thinks. Writer drafts. Auditor checks. Then Writer and Auditor loop until it's clean.

We've run this on client proposals, self-appraisals, status reports, analyses, and yes, this newsletter. Same three steps every time.

Pick one deliverable you need to create this week. Run it through the three steps. See what happens.

LEARN WITH US

Workshop on ‘Why AI Doesn’t Work for You (Yet)’

If you are a non-technical professional who wants to use AI at work without worry and in complete control, we are running a 2-hour small-group session on this topic.

Date: 21st February, 2026

Time: 2:00 pm to 4:00 pm IST

What you will learn:

how to work with AI on new tasks and existing ones

how to stop AI from getting dumb in long chats

one universal system to communicate better with AI

one universal system to get work-ready output in your own voice

how to set up your personal LLM safely even for work

If you wish to join the session, fill out this form and we will reach out to you with details: https://forms.gle/D6TGEVoFBUqKPsP49

Here is a testimonial from the session we ran last week.

Image Credits: Nikshubha Goswami’s Post / LinkedIn

THE OUTRO

That is all for today.

Thanks for taking 10 minutes to read through this newsletter. Please let us know what you thought of it, especially how useful it was for you.


You can also reply to this newsletter email if you want to share something specific with us. Have a great week ahead and see you again next week!

-Sonali & PD