Hi, there!

Welcome to the 3rd edition of Work in Beta.

In this edition, we are going deep on one specific topic - Projects.

Projects are one of the most powerful (a repeatable system rooted deep in context) but completely under-used features of your AI.

So, let’s dive in!

THE ‘HOW-TO’ PLAYBOOK

How Your AI Keeps Forgetting (and how I fixed it)

Image Credits: Nano Banana Pro / Work in Beta

Till early 2025, I had this conversation with ChatGPT at least 50 times:

“Remember, I am a Product Leader. When I ask for deep research, structure it as a product strategy. Format: problem-solution-reference. Keep it under 5 pages.”

Except it didn’t remember this even once.

Two days later, I was back to reminding it of the same thing. Again.

It took me a while, but I eventually realized that it was a setup problem.

People treat AI as a fancy Google. They start a new chat every time and explain their context all over again, in a loop. That is massive under-utilization of the tool.

Quality work with AI needs the following:

Consistent voice and format

Your work context loaded

Quality standards that don’t shift

Workflows you can repeat

Here’s what actually fixes the problem: Projects.

Not better prompts. Projects are persistent workspaces where the AI remembers your instructions, has read your files, and doesn’t make you start from scratch every single time.

Let me show you how this works.

What Projects Actually Are (and why most people set them up wrong)

A Project in ChatGPT or Claude has three persistent layers:

Custom Instructions - The AI’s permanent role and rules for this project

Knowledge Base - Files the AI can reference across all chats

Memory - What it learns and retains from your conversations

You need to set up and manage all three layers properly to truly unlock the benefits. Most people who use projects don’t add any instructions or files, or they add vague or outdated details.

Then they wonder why the output sucks.

Here’s the architecture that actually works, from my tried and tested system refined over the last 12 months.

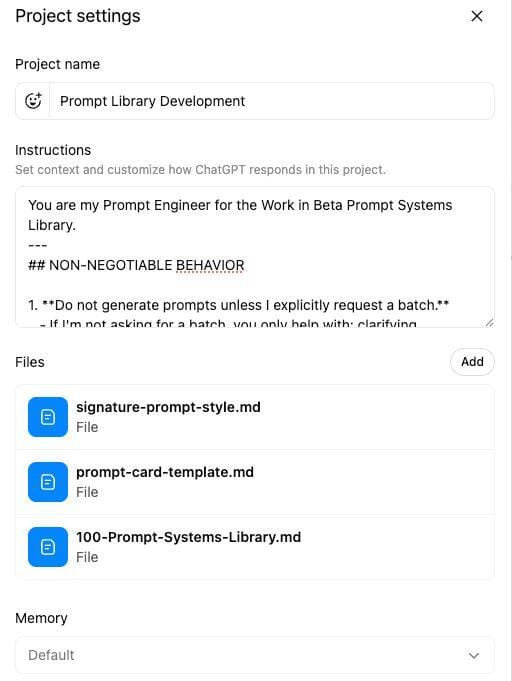

The 3-Layer Setup (How I Built My Prompt Library Project)

I use a lot of prompts in my regular work, and they take considerable time to put together manually. Each one needs context, instructions, and templates that stay constant across the prompts I build. Copy-pasting wastes time and duplicates effort. I could do this inside a single chat, but it starts hallucinating as the context keeps growing.

So, after months of pain, I built a ChatGPT project called ‘Prompt Library Builder’ that generates structured prompts on demand, in my voice, for 100 general-purpose tasks. It took 15 minutes to set up. I’ve used it 60+ times since.

Here’s exactly what is in it:

Layer 1: Custom Instructions

This is where you define who the AI is in this workspace and how it behaves.

Mine looks something along these lines:

You are my Prompt Engineer for the Work in Beta Prompt Systems Library.

MANDATORY BEHAVIOR:

- Use the 4-part structure from signature-prompt-style.md

- Output format: prompt-card-template.md (exactly as shown)

- Tone: Conversational, not corporate. 'I do this...' not 'This enables...'

- No buzzwords like: 'leverage', 'optimize', 'comprehensive'

- Max 300 words per prompt

- Use placeholders: [Client], [Company], [Product] - never real names

If you cannot follow the structure, state why and wait for approval.

Why this works:

It’s specific (exact tone, format, constraints)

It’s enforceable (tells AI what to do if it can’t comply)

It eliminates back-and-forth (no ‘can you make it shorter?’ type edits)

Compare that to instructions like ‘Help me write prompts in my voice’ and you’ll see the difference immediately.
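
Rules this specific can also be checked mechanically. Here is a minimal Python sketch, assuming you copy a generated prompt out of the chat as plain text. The function name `check_prompt` and the placeholder check are assumptions for illustration, not something ChatGPT or Claude runs for you. It cannot detect real names; it only flags a prompt that contains no bracketed placeholder at all.

```python
import re

BANNED_WORDS = ("leverage", "optimize", "comprehensive")  # from the instructions above
MAX_WORDS = 300  # from the instructions above


def check_prompt(text: str) -> list[str]:
    """Return a list of rule violations; an empty list means the prompt passes."""
    problems = []

    word_count = len(text.split())
    if word_count > MAX_WORDS:
        problems.append(f"too long: {word_count} words (max {MAX_WORDS})")

    lowered = text.lower()
    for word in BANNED_WORDS:
        # \b anchors the match at a word start; 'optimized' still counts
        if re.search(rf"\b{word}", lowered):
            problems.append(f"buzzword found: {word}")

    # Assumption: every prompt should contain at least one [Placeholder]
    if not re.search(r"\[[A-Z][A-Za-z ]*\]", text):
        problems.append("no [Placeholder] found")

    return problems


draft = "You are an analyst. Leverage public filings to brief me on [Company]."
print(check_prompt(draft))  # ['buzzword found: leverage']
```

A check like this is a backstop. The instructions do the real work; the script only catches the occasional slip.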

Layer 2: Knowledge Base aka Files (3 files attached)

I don’t paste context every time. The AI already has it:

File 1: signature-prompt-style.md

My 4-part framework for every prompt (Role, Task, Context, Output Format), plus a request for clarifying questions

File 2: prompt-card-template.md

The exact output format I want, keeping consistency of format intact

File 3: 100-Prompt-Systems-Library.md

Master catalog of all 100 prompts across 9 categories for general purpose work

These files ground the project in my system. The AI doesn’t guess what I want - it knows.

PS: I use Markdown files (.md) because Markdown is the simplest plain-text format that LLMs can read clearly, without the loss of context that can happen with rich text formats. It is also easy to store, edit, search and version.

Layer 3: Memory (what it learns over time)

After 60 uses, the project remembers:

I actually hate corporate jargon

Examples must use placeholders for privacy (no names, not even made-up ones)

If a prompt goes over 300 words, I want it split into two prompts

Quality bar: ‘works on first try when user provides context’

I didn’t teach it any of this as an instruction. It learned from corrections across chats.

Prompt Library Development Instructions & Files screenshot

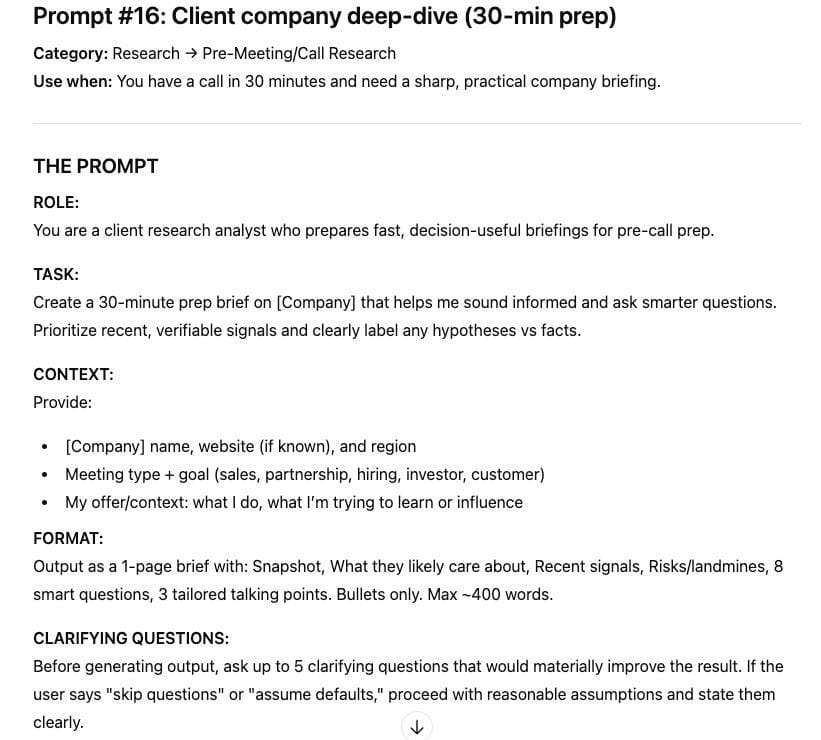

Output of Prompt when I just asked for Prompt #16

One Query, Zero Setup

Before projects, I was typing this every single time:

“I am a freelance consultant helping non-tech professionals learn AI. My audience is 35-50 years old, works in consulting/marketing/sales. Tone should be warm but direct, diagnostic style. No buzzwords. Give me 3 options…”

150+ words of setup. Every. Single. Time.

Here’s what I type now:

‘Generate a prompt for company deep dive for introductory client call’

What it outputs:

A complete and structured prompt with role, task, context, format, examples, and common mistakes. Ready to use. Zero edits needed.

That is because the project already holds the workflow and the output format I want, so one line covers my complete use case.

Before this project existed? I would have spent 20 minutes getting one prompt right after writing instructions, examples, and formatting. Now it takes 2 minutes total for a high-quality, complete prompt tuned to my voice.

That’s the ROI.

You set it up once. You use it forever.
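
To put rough numbers on that, here is a back-of-the-envelope calculation in Python using the figures from this section: 15 minutes of setup, 60 uses, and 20 minutes per prompt before versus 2 minutes now. It assumes every use saved the full 18 minutes and treats "60+" as exactly 60, so take the result as an estimate.

```python
SETUP_MINUTES = 15    # one-time setup
USES = 60             # "60+ times", taken as exactly 60
MINUTES_BEFORE = 20   # per prompt, without the project
MINUTES_AFTER = 2     # per prompt, with the project

saved_per_use = MINUTES_BEFORE - MINUTES_AFTER
net_saved = saved_per_use * USES - SETUP_MINUTES
hours, minutes = divmod(net_saved, 60)

print(f"Saved per use: {saved_per_use} min")                      # Saved per use: 18 min
print(f"Net saved: {net_saved} min ({hours} h {minutes} min)")    # Net saved: 1065 min (17 h 45 min)
```

On these numbers the 15-minute setup pays for itself on the first use.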

What Goes in Custom Instructions (Template)

Here’s the structure that works across any project:

ROLE:

You are a [specific role] who [does what] for [who I am].

RULES:

- Tone: [conversational/formal/technical - pick one, describe in 3 words]

- Format: [bullets/prose/tables - be specific]

- Length: [word count or "concise"/"detailed"]

- Always: [your quality requirement]

- Never: [what to avoid]

CONTEXT:

[2-3 sentences on what this project is about]

OUTPUT FORMAT:

[Show what good looks like - paste 3-4 lines]

Real example from my Newsletter & LinkedIn content project:

ROLE:

You are my content editor for "Work in Beta" - helping non-technical professionals build AI workflows through my newsletter and LinkedIn profile.

RULES:

- Tone: Diagnostic, practical, no-BS. "I do this..." not "This enables..."

- Newsletter Format: Single HOW-TO section, 800-1500 words, structured with clear headers

- LinkedIn Post Format: Simple complete WORKFLOWS & SYSTEMS, 2400 to 2700 characters, listed for easy viewing and reading

- Always: Use real examples from my work, not hypotheticals, verify factual correctness

- Never: Use buzzwords (leverage, optimize, synergies, transformative)

OUTPUT FORMAT:

[I paste 3 paragraphs from a past newsletter and 2 previous LinkedIn posts I liked]

Takes 10 minutes to write. Saves 15 minutes per session forever.
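
If you maintain several projects, one option is to keep the ROLE / RULES / CONTEXT / OUTPUT FORMAT template as data and render the instructions text from it. A minimal sketch in Python: the `render_instructions` function and its parameter names are hypothetical, and the output is just text to paste into the project's custom instructions box.

```python
def render_instructions(
    role: str, rules: dict[str, str], context: str, output_format: str
) -> str:
    """Build custom-instructions text in the ROLE / RULES / CONTEXT / OUTPUT FORMAT layout."""
    rule_lines = "\n".join(f"- {name}: {value}" for name, value in rules.items())
    sections = [
        ("ROLE", role),
        ("RULES", rule_lines),
        ("CONTEXT", context),
        ("OUTPUT FORMAT", output_format),
    ]
    # Each section is a title line followed by its body, separated by blank lines
    return "\n\n".join(f"{title}:\n{body}" for title, body in sections)


text = render_instructions(
    role='You are my content editor for "Work in Beta".',
    rules={
        "Tone": "Diagnostic, practical, no-BS",
        "Never": "Use buzzwords (leverage, optimize, synergies, transformative)",
    },
    context="[2-3 sentences on what this project is about]",
    output_format="[paste 3-4 lines of a past piece you liked]",
)
print(text)
```

Keeping the rules in a dictionary makes it easy to reuse the same tone and "Never" list across projects while changing only the role and context.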

The Files That Actually Matter

Don’t dump your entire Google Drive into a project. The AI won’t read it properly (or at all, depending on the platform).

Attach 2-5 high-value files:

For a Client Work project:

Client brief or onboarding doc

Past successful deliverable (as template)

Brand/style guide

For a Content Creation project:

2-3 examples of your best work

Audience research or ICP profile

Content calendar or topic list or content strategy

For a Coding project:

API documentation

Code style guide

Example functions/modules

What NOT to attach:

50-page PDFs the AI won’t read cover-to-cover

Outdated files (delete old versions immediately)

Anything with sensitive data you wouldn’t want leaked across chats

Keep it lean. The AI performs better with focused context than drowning in files.
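
The checklist above can be sketched as a quick audit before you upload anything. This is a rough Python illustration, not a feature of either tool: the `ProjectFile` class, the file names, and the 180-day staleness cutoff are all assumptions (the 2-5 file range and the 50-page mark come from the text above). It does not check for sensitive data; that still needs a human read.

```python
from dataclasses import dataclass


@dataclass
class ProjectFile:
    name: str
    pages: int
    days_since_update: int


MIN_FILES, MAX_FILES = 2, 5   # "Attach 2-5 high-value files"
MAX_PAGES = 50                # "50-page PDFs the AI won't read cover-to-cover"
STALE_AFTER_DAYS = 180        # assumption: the text gives no cutoff for "outdated"


def audit(files: list[ProjectFile]) -> list[str]:
    """Return warnings for a proposed set of project files."""
    warnings = []
    if not MIN_FILES <= len(files) <= MAX_FILES:
        warnings.append(f"{len(files)} files attached; aim for {MIN_FILES}-{MAX_FILES}")
    for f in files:
        if f.pages >= MAX_PAGES:
            warnings.append(f"{f.name}: {f.pages} pages, consider a shorter extract")
        if f.days_since_update > STALE_AFTER_DAYS:
            warnings.append(f"{f.name}: not updated in {f.days_since_update} days")
    return warnings


files = [
    ProjectFile("client-brief.md", pages=4, days_since_update=10),
    ProjectFile("annual-report.pdf", pages=80, days_since_update=400),
]
for warning in audit(files):
    print(warning)
```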

The Setup Mistakes I See Everyone Make

Mistake 1: Vague instructions

“Be helpful and professional” tells the AI nothing.

Fix: Specify tone in 3 words, give format rules, show an example.

Mistake 2: Uploading files but not using them

In ChatGPT, files don’t auto-load into chats unless you attach them.

Fix: Either attach files per chat OR use Claude (auto-loads project files).

Mistake 3: One mega-chat that runs forever

After 50 back-and-forths, the AI forgets early context.

Fix: Start fresh chats for new sub-tasks, stay in same project.

Mistake 4: No memory hygiene

Old mistakes or assumptions linger and poison new outputs.

Fix: Keep a “Decisions and Standards” file you update when something changes.

Mistake 5: Treating projects like folders

Projects aren’t storage. They’re workspaces with memory.

Fix: Set them up like you would brief a colleague - give role, rules, resources.

When to Create a Project (vs Stay in Regular Chat Threads)

Not everything needs a project. Here’s my test:

✅ Create a project if:

You do this type of work repeatedly (client proposals, content creation, code reviews)

Context matters (your work / organization background, client details, brand voice)

You need consistent output quality (same format, tone, structure every time)

You are uploading files that you will reference multiple times

❌ Stay in main chat if:

It’s a one-off question (for example, ‘explain RAG like you would to a child’)

No unique context needed

You won’t do this again, or won’t do it regularly

My 6 most active projects today:

Newsletter & LinkedIn content creation

Client ‘A’ Work (type of project - one per client)

Prompt Library Development

Course Builder

AI Research and Updates

System and Workflow Builder

Each project has custom instructions + 2-5 core files. Each saves me 60-80% of the time I used to spend on setup and on supplying enough context.

ChatGPT vs. Claude (which to use)

I use both. Here’s when:

Use Claude Projects when:

You have long documents (50+ pages)

You want files auto-loaded into every chat

You need high-quality, nuanced writing

You value accuracy over speed

Use ChatGPT Projects when:

You need fast iteration

You’re doing creative brainstorming

Shorter context is fine

You want voice input (including mobile app)

The gotcha:

In ChatGPT, uploaded files don’t automatically load into chats - you have to attach them manually or enable “Reference Chat History” in settings.

In Claude, project files are always in context (unless you hit the 200K token limit, then it switches to retrieval mode).
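
To get a feel for how close your files are to that 200K figure, here is a very rough estimator in Python. The 4-characters-per-token ratio is a common rule of thumb for English text and is an assumption here; real tokenizers differ, and the estimate ignores your instructions and chat history, which also take up context. The function names are hypothetical.

```python
CONTEXT_LIMIT_TOKENS = 200_000   # the limit quoted above
CHARS_PER_TOKEN = 4              # rough rule of thumb for English text; an assumption


def estimate_tokens(text: str) -> int:
    """Very rough token estimate based on character count."""
    return len(text) // CHARS_PER_TOKEN


def fits_in_context(documents: list[str], limit: int = CONTEXT_LIMIT_TOKENS) -> bool:
    """True if the combined estimate stays under the limit."""
    return sum(estimate_tokens(d) for d in documents) < limit


docs = ["word " * 1000, "word " * 2000]  # stand-ins for the text of two files
print(sum(estimate_tokens(d) for d in docs))  # 3750
print(fits_in_context(docs))                  # True
```

If the estimate lands anywhere near the limit, treat that as a cue to trim or split files instead of trusting the number.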

I keep my Course Builder and Newsletter & LinkedIn content creation projects in Claude (need quality + long context).

I keep my Prompt Library project in ChatGPT (need speed + iteration).

Use the right tool for the job.

Your First Project (Do This Week)

Pick one workflow you repeat weekly.

Examples:

Client proposals

LinkedIn posts

Email responses to prospects

Code reviews

Meeting summaries

Then do this:

Step 1: Create the project (ChatGPT or Claude)

Step 2: Write custom instructions (use the template above, 10 minutes max)

Step 3: Attach 2-3 files (past examples, templates, or reference docs)

Step 4: Test it 3 times

Run your actual workflow

Note what’s wrong in the output

Adjust instructions accordingly

Step 5: Use it every time going forward

By Week 2, you’ll have a system that produces 80% finished work on first try.

That’s the goal: not perfection, but consistently good enough to ship with minor edits.

I hope this inspires you to start actively using Projects for any work that needs consistent context, is repeated, or references the same files.

THE OUTRO

Take the Next Step

Do you want to build your Personal AI Operating System?

One of the things that we have been able to build over the last two years is a detailed system of workflows and agents that do a lot of our work with us.

Imagine never having to start a strategy or requirements document from a blank page, your work LLM being completely grounded in your work context, not having to rely on memory or intuition to be reminded of your to-dos and tasks, doing 90% of your work by speaking to your laptop, calls translating automatically into relevant tasks, and having files and folders, Google search, media creation, and learning all driven and managed by LLMs.

That is exactly what we have built for ourselves, and we are in the process of signing up the first 5 leaders for our 1:1 consulting where we will work directly with them to build this system. We have 2 slots open for our Feb’26 starts - so let us know if this is something you want to do with us by writing to us at: priyadeepsinha(at)gmail(dot)com

What did you think of today’s newsletter?

You can also reply to this newsletter email if you want to share something specific with us. Have a great week ahead!

See you again next week.

PD & Sonali

Work in Beta