Hi there!

Welcome to the 19th edition of Work in Beta.

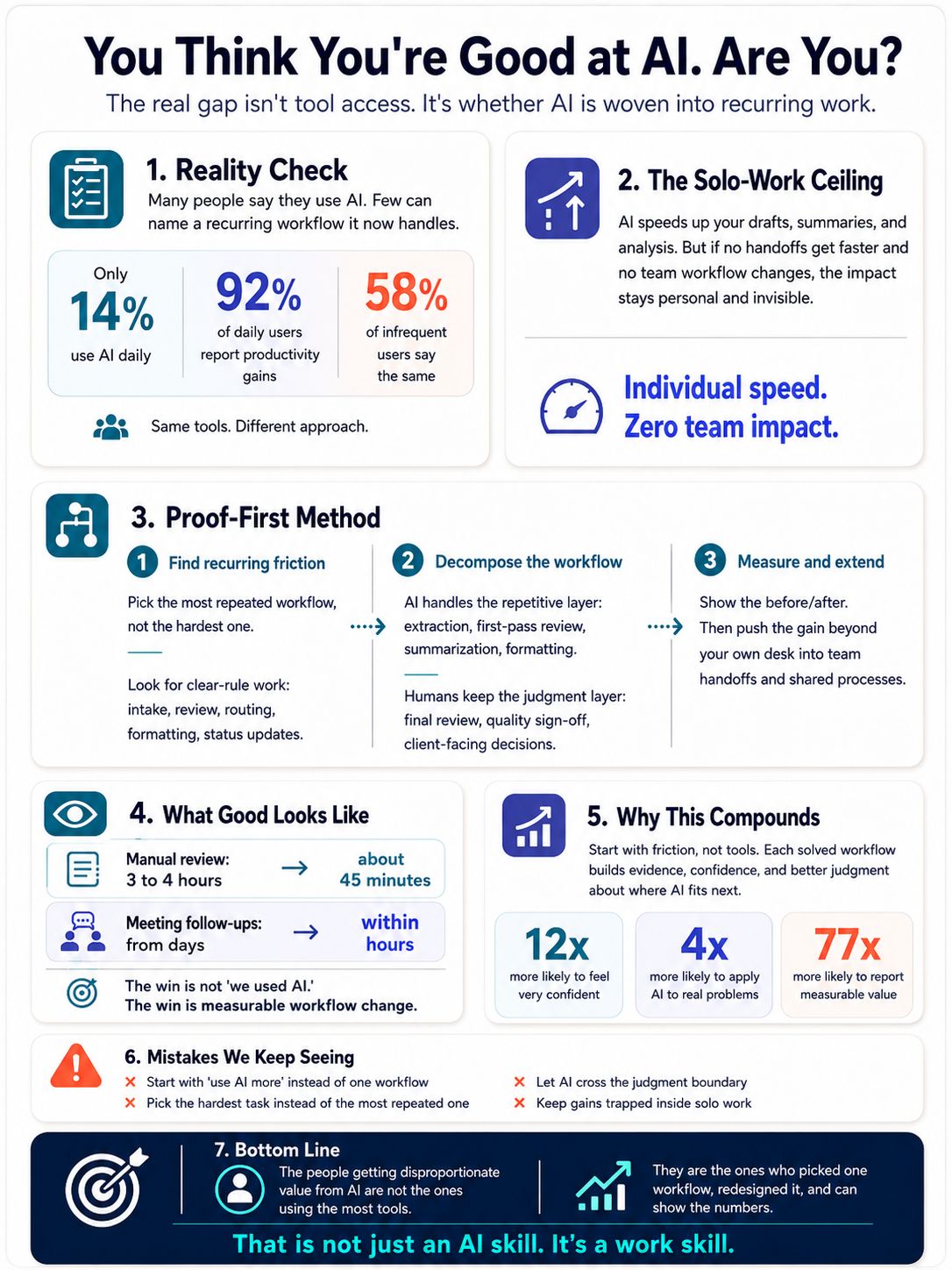

In this edition, we break down what separates the people getting real results from AI from the ones who are just "using" it, and we give you a 3-step method to close that gap this week.

We're planning a Claude Code workshop soon. Hands-on, practical, built for people who want to actually work differently with AI, not just watch someone else do it. Drop your details here to get on the waitlist.

Let's dive in!

IF YOU ONLY HAVE 2 MINUTES

Image Credits: ChatGPT / Work in Beta

THE ‘HOW TO’ PLAYBOOK

You Think You're Good at AI. Are You?

Something we keep seeing in our workshops: everyone says they are "using AI." But ask them what recurring work they've pointed AI at - what it handles now, what it used to cost them in hours - and the room goes quiet.

The gap isn't on the output side. People aren't failing to show results. The gap is on the input side: they're not looking at the work they repeat every week and asking, "How much of this can AI chip away at?"

They're treating AI as something they visit when a task comes up, not something woven into the work itself. A search engine. One query, one answer, move on.

PwC's 2025 Workforce Survey puts a number on this: only 14% of workers use AI daily. But those daily users report dramatically different outcomes - 92% say it improves their productivity, compared to 58% of infrequent users. Same tools. Same access. Completely different approach.

The difference isn't the tool. It's where you point it.

The Solo-Work Ceiling

Here's where most AI usage lives today: you use it to speed up your own tasks. A better first draft. A faster summary. A quicker analysis. The output stays with you.

No handoffs get faster. No team workflows change. No coordination costs drop. Your growth is capped at what you can do yourself.

We call this the solo-work ceiling - individual speed, zero team impact. Invisible to everyone except you.

Breaking through it requires a different approach. Not "use AI more." Not "learn more tools." Instead: pick one recurring workflow, redesign it with AI in the right places, measure what changed, and extend it past your own desk.

That's The Proof-First Method.

Step 1: Find the Recurring Friction

Not the hardest task on your plate. The most repeated one.

The best AI starting points are your highest-volume, most repeatable workflows with clear rules - the ones that follow the same steps every time but still eat hours. Think: intake, review, routing, summarization, status updates, data formatting.

Anthropic's Economic Index supports this: 57% of the real-world Claude usage it analyzed was augmentation, not automation. The biggest wins aren't about handing whole jobs to AI. They're about finding the repetitive layer inside work you already do and letting AI handle that layer.

We see this in our own work. The workflows that gave us the biggest returns weren't the complex, high-judgment tasks. They were the ones we repeated every week - content reviews, client prep summaries, workshop follow-ups - that followed the same steps but still ate 2-3 hours each time.

The question to ask: "What do we do every week that follows the same steps but still eats hours?"
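If you want to make that question concrete, here is a minimal sketch of one way to rank candidates: multiply how often a workflow runs by how long each run takes, and only keep the ones that follow the same steps every time. The workflow names echo the examples above, but every number and the `Workflow` structure itself are our own illustrative assumptions, not measured data.

```python
from dataclasses import dataclass


@dataclass
class Workflow:
    name: str
    runs_per_week: int          # hypothetical count, fill in your own
    minutes_per_run: int        # hypothetical duration, fill in your own
    same_steps_each_time: bool  # does it follow clear, repeatable rules?

    @property
    def weekly_minutes(self) -> int:
        # Total time this workflow eats in a typical week.
        return self.runs_per_week * self.minutes_per_run


def rank_candidates(workflows):
    """Keep repeatable workflows only, sorted by weekly time cost (highest first)."""
    repeatable = [w for w in workflows if w.same_steps_each_time]
    return sorted(repeatable, key=lambda w: w.weekly_minutes, reverse=True)


# Illustrative numbers only (assumptions, not real measurements).
workflows = [
    Workflow("content review", 3, 150, True),
    Workflow("client prep summary", 2, 120, True),
    Workflow("workshop follow-up", 1, 180, True),
    Workflow("pricing strategy rethink", 1, 240, False),  # hard, not repeated
]

for w in rank_candidates(workflows):
    print(f"{w.name}: {w.weekly_minutes / 60:.1f} hours/week")
```

The hard, one-off task drops out of the list entirely, which is the point: the ranking rewards repetition, not difficulty.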

Step 2: Decompose the Workflow

Once you've picked the workflow, split it into two layers:

The repetitive layer: first-pass reviews, formatting, extraction, summarization, data entry. AI handles this.

The judgment layer: final review, client-facing decisions, quality sign-off, nuance. Humans hold this.

A 12-lawyer boutique firm applied this split to NDA review. AI runs the first pass: review time dropped from 2 hours to 25 minutes. Missed-clause rates fell from 4.2% to 0.8%. But lawyer review stayed as the final quality gate. Automation on the repetitive layer, judgment on the human layer.

One important boundary: the Harvard/BCG field experiment found that AI-assisted consultants completed 12.2% more tasks, finished them 25.1% faster, and produced work rated more than 40% higher in quality - but only inside the boundary of what the model could actually handle well. Outside that boundary, performance degraded. Know where the line is. AI on the repetitive layer. You on the judgment layer.

Step 3: Measure the Before/After - Then Extend It Beyond You

Sheila Head, Head of Marketing Operations at Asana, used an AI teammate to review post-offsite marketing projects for completeness - milestones, owners, context, deadlines. A manual review that took 3-4 hours dropped to about 45 minutes after roughly 10 minutes of setup and iterative feedback.

That's the proof: not "we used AI" but "this workflow used to take 3-4 hours and now takes 45 minutes."
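A before/after claim like that is simple arithmetic, and it helps to compute it the same way every time. The sketch below is one possible way to do it, using the figures quoted in this edition (the NDA review, and the lower end of the 3-4 hour Asana review). The function name `time_saved` and its return fields are our own hypothetical choices.

```python
import math


def time_saved(before_min, after_min, setup_min=0):
    """Summarize a before/after comparison for one run of a workflow."""
    if before_min <= 0:
        raise ValueError("before_min must be positive")
    saved = before_min - after_min
    return {
        "saved_minutes": saved,
        "percent_reduction": round(100 * saved / before_min, 1),
        # How many runs it takes for the savings to cover the one-time setup.
        # None means the redesign never pays back (no time saved per run).
        "runs_to_recoup_setup": math.ceil(setup_min / saved) if saved > 0 else None,
    }


# NDA review from Step 2: 2 hours down to 25 minutes.
nda = time_saved(before_min=120, after_min=25)

# Project review above: lower end of 3-4 hours down to about 45 minutes,
# after roughly 10 minutes of setup.
review = time_saved(before_min=180, after_min=45, setup_min=10)

print(nda)
print(review)
```

Using the lower end of a range keeps the claim conservative. If the real baseline was closer to 4 hours, the reduction only looks better.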

But the real shift is extending past solo work. When Morgan Stanley's advisors started using AI for meeting notes and follow-ups, the individual time savings were clear - roughly 30 minutes per meeting. But the bigger change was what happened downstream: follow-ups that used to take days started reaching clients within hours. That's not personal productivity. That's team-level coordination getting faster. The impact becomes visible to people beyond you.

The NBER study of roughly 5,000 customer support agents found the same pattern at scale: AI guidance increased issues resolved per hour by 13.8%. More striking: less experienced agents gained the most and closed much of the gap with the best performers, which meant the whole team's floor rose, not just the ceiling. When everyone around you gets faster at the repeatable layer, the team's capacity grows.

Why This Works And Why It Compounds

This method works because it inverts how most people approach AI. Instead of starting with the tool and looking for tasks, you start with the friction and redesign around it. That means every workflow you fix teaches you something about where AI fits and where it doesn't. We covered the infrastructure side of this in Edition 16 (Personal AI OS). This edition is about what you point that infrastructure at.

Degreed and Harvard Business Publishing put numbers on the compounding: workers who weave AI into daily work are 12x more likely to feel very confident using it, 4x more likely to apply it to real problems, and 77x more likely to report measurable value.

Each solved workflow builds three things: evidence you can show, confidence in where AI fits, and better judgment about where to point it next. That's a compounding loop, not a one-time hack.

The Mistakes We See People Make

Starting with "use AI more" instead of one recurring workflow. Vague mandates produce vague results. The people who get real traction pick one specific, repeatable piece of work and redesign it. Everything else follows from there.

Picking the hardest task instead of the most repeated one. Hard tasks have ambiguity, edge cases, and judgment calls - exactly where AI is weakest. Repeated tasks have clear rules and predictable structure - exactly where AI is strongest. Start where the wins are easy to prove.

Letting AI cross the judgment boundary. AI on first-pass review, formatting, extraction - strong. AI making final client-facing decisions without human review - dangerous. The boutique legal team kept lawyers as the final gate. That's the pattern.

Keeping AI gains inside your own work. You speed up your tasks, but no one else benefits. No handoffs get faster. No coordination costs drop. That's the solo-work ceiling. The real value comes when the redesigned workflow extends into team handoffs, shared processes, and cross-functional work.

Final Thought

The people getting disproportionate value from AI aren't the ones who know the most tools. They're the ones who picked one workflow, redesigned it, and can show you the numbers.

That's not an AI skill. It's a work skill. AI just made it visible.

WORK WITH US

The Other 95%

Knowing how to prompt well is roughly 5% of what it means to actually work with AI. The other 95% - context architecture, workflow design, thinking behaviors, tool orchestration - is where your workday actually changes. Not "I got a better first draft." More like "I rebuilt how my team runs weekly reporting, and now it takes 12 minutes instead of 4 hours."

That's what we work on with professionals and teams through Work in Beta.

For individuals: We help you build the skills, context files, and workflows that turn AI from a chatbot into a system that actually runs your work.

For organizations: We help teams design the AI operating systems - skills, workflows, context architecture - that make adoption stick beyond "everyone has a login."

If you're curious what the other 95% looks like, reach out to us here.